As I explained then, all someone has to do to participate is read the relevant sections of a case that is cited by another case and then click a radio button to indicate whether the reference was Positive, Referencing, Distinguishing or Negative. Participants earn points and the points lead to prizes, as well as a listing on the leaderboard.

Last week, I spoke to Pablo Arredondo, vice president, legal research at Casetext. He told me that in just the month or so since the initiative launched, it has generated more than 67,000 citator entries and has the participation of 500 students from 90 law schools.

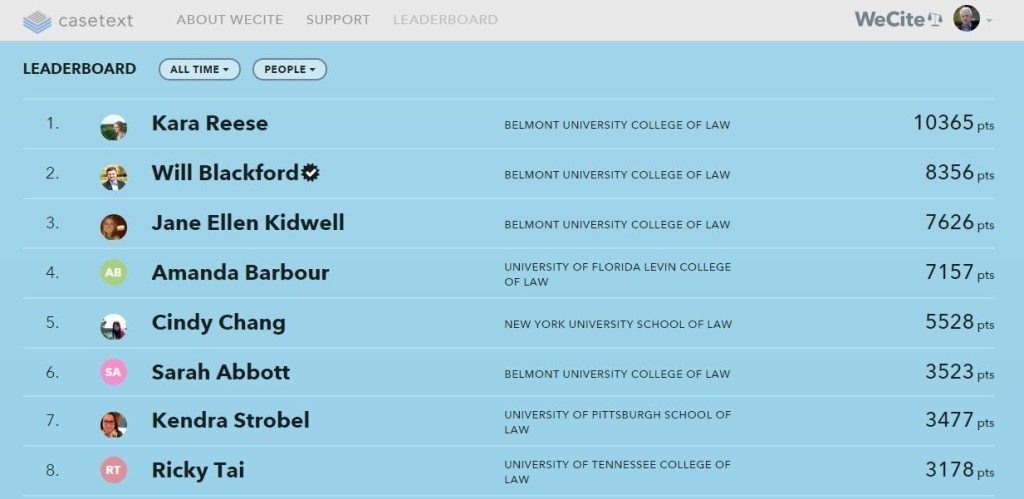

If you’re wondering which students from which law schools are participating, the leaderboard shows the top names and law schools and can be sorted by either. So far, the top law school in points accumulation is Belmont University College of Law in Nashville, Tenn.

Some of you are probably thinking, “How reliable are the judgments of law students when it comes to citation authority?” Casetext has thought about that too. Arredondo says he is in the process of assembling a group of law librarians who will serve as the final arbiters of the category decisions.

When Westlaw first created KeyCite, Arredondo says, it used algorithms to analyze citations and suggest their treatment of a case, and then an editor would confirm or deny the algorithm’s suggestion.

Casetext is employing a similar process, except that it is using law students instead of an algorithm and law librarians instead of editors.

So far, WeCite’s citator covers every outgoing citation in Supreme Court decisions from the last 20 years. Casetext has just started adding citator treatment for federal appellate cases and will eventually add state supreme and appellate court cases.

Casetext also plans to provide the citator entries to Cornell’s Legal Information Institute, which would make them available as a bulk download to anyone who wants to use the data.

“I have had law students tell me it’s addictive,” Arredondo says. “And they like the competition aspect.”

Robert Ambrogi Blog
