A study released this week pitted two legal research platforms against each other, Casetext CARA and Lexis Advance from LexisNexis, and concluded that attorneys using Casetext CARA finished their research significantly more quickly and found more relevant cases than those who used Lexis Advance.

The study, The Real Impact of Using Artificial Intelligence in Legal Research, was commissioned by Casetext, which contracted with the National Legal Research Group to provide 20 experienced research attorneys to conduct three research exercises and report on their results. Casetext designed the methodology for the study in consultation with NLRG, and Casetext wrote the report of the survey results.

This proves, says Casetext, the efficacy of its approach to research, which — as I explained in this post last May — lets a researcher upload a pleading or legal document and then delivers results tailored to the facts and legal issues derived from the document.

“Artificial intelligence, and specifically the ability to harness the information in the litigation record and tailor the search experience accordingly, substantially improves the efficacy and efficiency of legal research,” Casetext says in the report.

But the LexisNexis vice president in charge of Lexis Advance, Jeff Pfeifer, took issue with the study, saying that he has significant concerns with the methodology and sponsored nature of the project. More on his response below.

The Study’s Findings

The study specifically concluded:

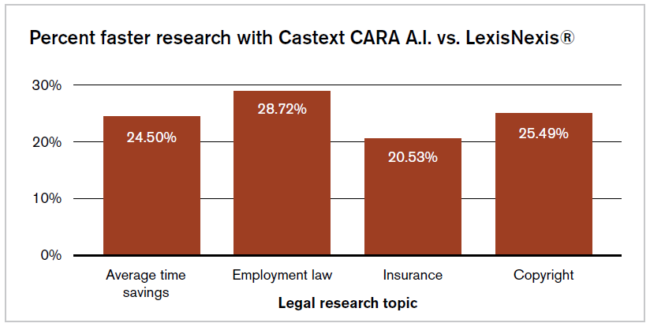

- Attorneys using Casetext CARA finished the three research projects on average 24.5 percent faster than attorneys using traditional legal research. Over a year, that faster pace of research would save the average attorney 132 to 210 hours, Casetext says.

- Attorneys using Casetext CARA found that their results were on average 21 percent more relevant than those found doing traditional legal research. This was true across relevance markers: legal relevance, factual relevance, similar parties, jurisdiction, and procedural posture.

- Attorneys using CARA needed to run 1.5 searches, on average, to complete a research task, while those using LexisNexis needed to run an average of 6.55 searches.

- Nine of the 20 attorneys believed they would have missed important or critical precedents if they had done only traditional research without also using Casetext CARA.

- Fifteen of the attorneys preferred their research experience on Casetext over LexisNexis, even though it was their first experience using Casetext.

- Every attorney said that, if they were to use another research system as their primary research tool, they would find it helpful to also have access to Casetext.
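As a rough check on the time-savings figure above, the sketch below back-calculates the annual research hours that a 132-to-210-hour saving would imply. It assumes that "24.5 percent faster" means 24.5 percent less time spent, which is my reading and not something the figures here spell out. The function name is purely illustrative.

```python
# Assumption: "24.5 percent faster" is read as 24.5 percent less time spent.
TIME_REDUCTION = 0.245


def implied_annual_research_hours(hours_saved: float) -> float:
    """Baseline yearly research hours that would yield the given savings."""
    return hours_saved / TIME_REDUCTION


low = implied_annual_research_hours(132)
high = implied_annual_research_hours(210)
print(f"Implied baseline: {low:.0f} to {high:.0f} research hours per year")

# Ratio of the average searches per task reported in the study (6.55 vs. 1.5).
search_ratio = 6.55 / 1.5
print(f"LexisNexis users ran about {search_ratio:.1f}x as many searches")
```

Under that reading, the savings range corresponds to an attorney who spends roughly 540 to 860 hours a year on research.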

Study Methodology

The attorneys who performed the research are all experienced in legal research and have on average 25.3 years in the legal profession, the report says. They were each given a 20-minute training in using Casetext CARA. They were given a brief introduction to LexisNexis, but their familiarity with that platform “was presumed.”

The attorneys were given three research exercises, in copyright, employment and insurance, and told to find 10 relevant cases for each. They were randomly assigned to complete two exercises using one platform and one using the other, so that roughly the same number of exercises were performed on each platform.
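To make the assignment scheme concrete, here is a minimal sketch of one way such a split could be randomized. It is not the procedure the study used, which the report summary does not describe in detail. The function name, the seed, and the choice to give exactly half the attorneys two exercises on each platform are all assumptions for illustration.

```python
import random

EXERCISES = ["copyright", "employment", "insurance"]
PLATFORMS = ("Casetext CARA", "Lexis Advance")


def assign(num_attorneys: int = 20, seed: int = 0) -> list[dict[str, str]]:
    """Hypothetical balanced assignment: two exercises on one platform, one on the other."""
    rng = random.Random(seed)
    # Half the attorneys do two exercises on each platform, in shuffled order.
    majority = [PLATFORMS[i % 2] for i in range(num_attorneys)]
    rng.shuffle(majority)

    plans = []
    for major in majority:
        minor = PLATFORMS[1] if major == PLATFORMS[0] else PLATFORMS[0]
        # Pick at random which single exercise goes to the other platform.
        odd_one_out = rng.choice(EXERCISES)
        plans.append({ex: (minor if ex == odd_one_out else major) for ex in EXERCISES})
    return plans


plans = assign()
counts = {p: sum(list(plan.values()).count(p) for plan in plans) for p in PLATFORMS}
print(counts)
```

With 20 attorneys and three exercises each, this split puts 30 of the 60 exercises on each platform, which is one way to get "roughly the same number" on both.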

With each exercise, they were given litigation documents from real cases (complaints or briefs) and were asked to review and familiarize themselves with those materials. They were then given specific research tasks, such as “find ten cases that help address the application of the efficient proximate cause rule discussed in the memorandum in support of the motion for summary judgment.”

When researchers used CARA, they were able to upload the litigation materials. The study says that some researchers using Casetext were given sample search terms, but that most formulated their own search terms.

The researchers were told to track how long it took to perform each research assignment and how relevant they believed each case result to be, and to download their research histories. They were then asked a series of survey questions about their overall impressions of their research experiences.

Casetext then compiled all the information and prepared the report.

Lexis Raises Concerns

Pfeifer, who as chief product officer, North America, oversees Lexis Advance, expressed concern that the survey report failed to fully disclose the relationship between Casetext and NLRG. LexisNexis provided me with the following quotation from John Buckley, president of NLRG:

Our participation in the study primarily involved providing attorneys as participants in a study that was initially designed by Casetext. We did not compile the results or prepare the report on the study—that was done by Casetext.

Pfeifer also raised concerns about the study methodology. “The methods used are far removed from those employed by an independent lab study,” he said. “In the survey in question, Casetext directly framed the research approach and methodology, including hand-picking the litigation materials the participants were to use.”

Finally, Pfeifer noted that participants were trained on Casetext prior to the exercise, but not on Lexis Advance. “With only a brief introduction to Lexis Advance, it was presumed that all participants already had a basic familiarity with Lexis Advance and all of its AI-enabled search features.”

“From the limited information presented in the paper, the actual search methods used by study participants do not appear to be in line with user activity on Lexis Advance,” Pfeifer said. “References to ‘Boolean’ search is not representative of results generated by machine learning-infused search on Lexis Advance.”

Casetext Responds

During a phone call yesterday, Casetext CEO Jake Heller and Chief Legal Research Officer Pablo Arredondo defended the study.

“We think this is pretty darn neutral,” Heller said. “We gave it to them [NLRG] and they ran with it.”

Heller said that Casetext and NLRG worked collaboratively to design the methodology and that NLRG gave a lot of feedback in setting up the study.

I asked them why the study singled out LexisNexis for comparison and did not include other legal research services, and particularly why they did not include the new Westlaw Edge. They said that many legal professionals view Westlaw and LexisNexis as interchangeable, and that their goal was to demonstrate how Casetext stacked up against this traditional research duopoly.

Bottom Line

When a tobacco company funds research on the health effects of cigarette smoking, it doesn’t matter what the research finds. No matter how it turns out, the study is tainted by the source of the dollars that paid for it.

That’s my problem with this study. I’m a fan of Casetext’s CARA. When I tested it for myself in May, I was impressed, writing:

In my initial testing, the addition of CARA’s AI to a query makes a powerful combination, delivering results that much more closely matched my facts and issues.

But this study is tainted by Casetext’s funding of it and control over it — down to providing the research issues and materials and even suggesting search terms. That does not mean it is wrong. It just means there is a big question mark hovering over it.

But here, Casetext’s Arredondo gets the final word. Because when I raised that issue, here is what he said: “The best study would be for an attorney to sign up for a free trial of CARA and see for themselves.”

So if, like me, you’re a skeptic by nature, give it a try.

[Side note: Coincidentally, my new LawNext podcast recently featured episodes with both Heller and Arredondo of Casetext and Pfeifer of LexisNexis. Give them a listen to hear more about their work.]

Robert Ambrogi Blog
