“What if legal research was more human, more like a conversation, the kinds we have among ourselves?”

With that tantalizing query, Serena Wellen, senior director of research information at LexisNexis, revealed during a Legalweek media briefing that the legal research platform Lexis Advance will soon include chatbots to help guide users in their research.

“Our goal is to make legal research more guided in a more conversational experience,” Wellen said.

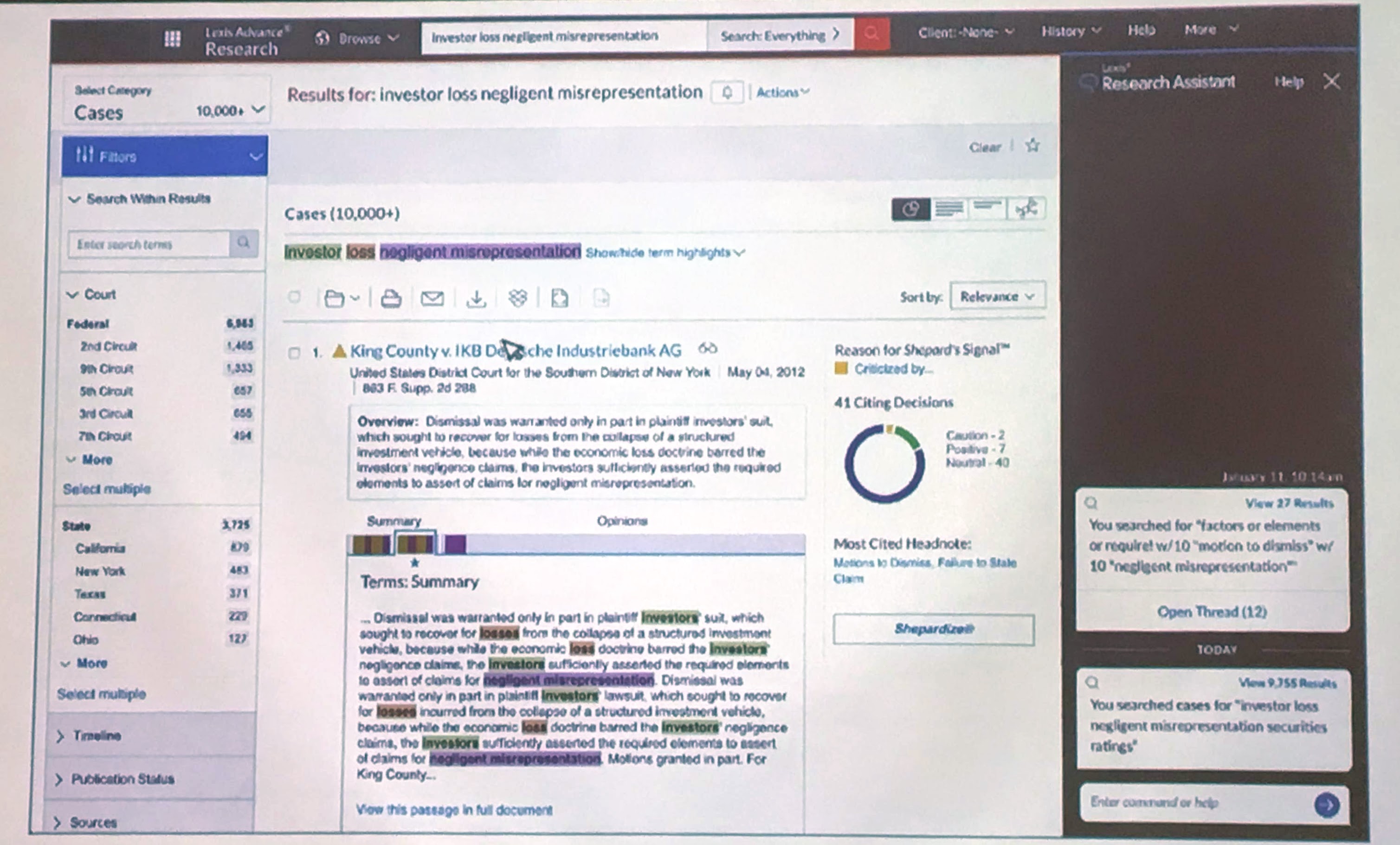

Called Lexis Research Assistant, the bot is slated for release in the Lexis Advance platform within the next few months. Eventually, users will be able to interact with multiple sub-bots for various tasks related to their research workflow.

During a brief demonstration, Wellen showed that the bot is activated by clicking an icon in Advance. That opens an activity stream along the right side of the screen showing your recent research activity. Each bubble in the stream represents a research thread you performed. All your work is saved as a thread within the bot. At any time, you can click a thread to engage conversationally with the bot.

Wellen showed some of the ways this could be used:

- While performing research, you are interrupted by a call and lose your train of thought. Click on the bot to pick up where you were.

- A few days later, you enter a search query. The bot recognizes that you have searched on this topic before. It asks if you want to leverage that past work. It might also suggest filters based on your recent activity, such as limiting your search to a particular jurisdiction.

- A few months later, you simply ask the bot, “Help me understand this topic.” The bot may offer a suggestion in Lexis Answers. You might then ask if you’ve researched this area before. It can tell you if you have, show you that research, and even tell you if you saved any documents in that prior research.

Eventually, this simple bot will evolve into multiple sub-bots, Wellen said, making it easier for researchers to access what they need more naturally within their workflow.

“Conversational search is the next big breakthrough in research,” she said.

Not Clippy

In a separate conversation at Legalweek, I learned more about the bot from Jeffrey S. Pfeifer, vice president and chief product officer, North America, at LexisNexis, and Nicholas Reed, the Ravel Law cofounder who is now senior director of product and strategy at LexisNexis.

Pfeifer said that he sees two key use cases for the bot. One is when the researcher is exploring an unfamiliar area or topic of law. The bot can be like an electronic mentor, guiding the researcher to the sources people typically look at for that topic.

The other use case is when a researcher revisits prior research. Users’ recall of their prior research is typically low, Pfeifer said. The bot can present it back to them, pointing out that they did similar research three months ago and offering to show it to them again. The bot will also get better over time at predicting a user’s intent as the user interacts with the system.

“We see in the future an interaction with Lexis Advance that is highly conversational,” Pfeifer said. “You ask a question, we present results. The interaction becomes more human-like.”

Pfeifer described the bot as serving as an “early intercept” in a research session. When a researcher enters a query, the bot might ask whether the user is looking for a general overview of the topic or specific case law. Based on the user’s answer, results might show either a Lexis Practice Advisor topic or a case list.

Right about now, some of you reading this post might be having flashbacks to Clippy, Microsoft’s much-maligned, animated office assistant.

But Lexis Research Assistant won’t be Clippy, Pfeifer said. It will appear and disappear in a way that is not intrusive and that is designed to be highly useful to the workflow activity. Users who have tested it so far have reacted well to it, he said.

“Users don’t want it to be intrusive. They want to see it when they need it.”

LexisNexis is also experimenting with voice interaction, but that is farther down the development path.

Robert Ambrogi Blog
